- Overview

- We can see now that information is what our world runs on: the blood and the fuel, the vital principle. It pervades the sciences from top to bottom, transforming every branch of knowledge. Information theory began as a bridge from mathematics to electrical engineering and from there to computing. What English speakers call “computer science” Europeans have known as informatique, informatica, and Informatik. Now even biology has become an information science, a subject of messages, instructions, and code. Genes encapsulate information and enable procedures for reading it in and writing it out. Life spreads by networking. The body itself is an information processor.

- The bit is a fundamental particle of a different sort: not just tiny but abstract—a binary digit, a flip-flop, a yes-or-no. It is insubstantial, yet as scientists finally come to understand information, they wonder whether it may be primary: more fundamental than matter itself. They suggest that the bit is the irreducible kernel and that information forms the very core of existence.

- Every new medium transforms the nature of human thought. In the long run, history is the story of information becoming aware of itself.

- In our world of ingrained literacy, thinking and writing seem scarcely related activities. We can imagine the latter depending on the former, but surely not the other way around: everyone thinks, whether or not they write. But Havelock was right. The written word—the persistent word—was a prerequisite for conscious thought as we understand it. It was the trigger for a wholesale, irreversible change in the human psyche—

- Writing in and of itself had to reshape human consciousness. Among the many abilities gained by the written culture, not the least was the power of looking inward upon itself.

- Jonathan Miller rephrases McLuhan’s argument in quasi-technical terms of information: “The larger the number of senses involved, the better the chance of transmitting a reliable copy of the sender’s mental state.”

- Language did not function as a storehouse of words, from which users could summon the correct items, preformed. On the contrary, words were fugitive, on the fly, expected to vanish again thereafter. When spoken, they were not available to be compared with, or measured against, other instantiations of themselves.

- Babbage

- Babbage invented his own machine, a great, gleaming engine of brass and pewter, comprising thousands of cranks and rotors, cogs and gearwheels, all tooled with the utmost precision. He spent his long life improving it, first in one and then in another incarnation, but all, mainly, in his mind. It never came to fruition anywhere else. It thus occupies an extreme and peculiar place in the annals of invention: a failure, and also one of humanity’s grandest intellectual achievements. It failed on a colossal scale, as a scientific-industrial project “at the expense of the nation, to be held as national property,” financed by the Treasury for almost twenty years, beginning in 1823 with a Parliamentary appropriation of £1,500 and ending in 1842, when the prime minister shut it down. Later, Babbage’s engine was forgotten. It vanished from the lineage of invention. Later still, however, it was rediscovered, and it became influential in retrospect, to shine as a beacon from the past.

- “What shall we do to get rid of Mr. Babbage and his calculating machine?” Prime Minister Robert Peel wrote one of his advisers in August 1842. “Surely if completed it would be worthless as far as science is concerned.… It will be in my opinion a very costly toy.”

- Great startups often look useless, like "toys," early on.

- Lady Ada Lovelace

- On her own she studied Euclid. Forms burgeoned in her mind. “I do not consider that I know a proposition,” she wrote another tutor, “until I can imagine to myself a figure in the air, and go through the construction & demonstration

- She listed her qualities: Firstly: Owing to some peculiarity in my nervous system, I have perceptions of some things, which no one else has; or at least very few, if any.… Some might say an intuitive perception of hidden things;—that is of things hidden from eyes, ears & the ordinary senses.… Secondly;—my immense reasoning faculties; Thirdly;… the power not only of throwing my whole energy & existence into whatever I choose, but also bring to bear on any one subject or idea, a vast apparatus from all sorts of apparently irrelevant & extraneous sources. I can throw rays from every quarter of the universe into one vast focus.

- She devised a process, a set of rules, a sequence of operations. In another century this would be called an algorithm, later a computer program, but for now the concept demanded painstaking explanation. The trickiest point was that her algorithm was recursive. It ran in a loop. The result of one iteration became food for the next. Babbage had alluded to this approach as “the Engine eating its own tail.” A.A.L. explained: “We easily perceive that since every successive function is arranged in a series following the same law, there would be a cycle of a cycle of a cycle, &c.… The question is so exceedingly complicated, that perhaps few persons can be expected to follow.… Still it is a very important case as regards the engine, and suggests ideas peculiar to itself, which we should regret to pass wholly without allusion.”
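
The loop described above, where "the result of one iteration became food for the next," can be sketched in a few lines. Lovelace's published program computed Bernoulli numbers, so the sketch below does the same. It uses a standard modern recurrence and the convention B_1 = -1/2; it is not a transcription of her table of operations, and the function name `bernoulli_numbers` is only illustrative.

```python
from fractions import Fraction
from math import comb


def bernoulli_numbers(n):
    """Return [B_0, ..., B_n] as exact fractions (convention with B_1 = -1/2)."""
    results = [Fraction(1)]  # B_0 = 1 seeds the loop
    for m in range(1, n + 1):
        # Every earlier result feeds the next one:
        # B_m = -1/(m+1) * sum over k < m of C(m+1, k) * B_k
        acc = sum(comb(m + 1, k) * results[k] for k in range(m))
        results.append(-acc / (m + 1))
    return results


if __name__ == "__main__":
    for index, value in enumerate(bernoulli_numbers(8)):
        print(index, value)
```

Exact fractions are used so that rounding error does not accumulate from one pass of the loop to the next.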

- Claude Shannon, Information Theory, Entropy

- Claude Shannon liked games and puzzles. Secret codes entranced him, beginning when he was a boy reading Edgar Allan Poe. He gathered threads like a magpie.

- Information is uncertainty, surprise, difficulty, and entropy: “Information is closely associated with uncertainty.” Uncertainty, in turn, can be measured by counting the number of possible messages. If only one message is possible, there is no uncertainty and thus no information. Some messages may be likelier than others, and information implies surprise. Surprise is a way of talking about probabilities. If the letter following t (in English) is h, not so much information is conveyed, because the probability of h was relatively high. “What is significant is the difficulty in transmitting the message from one point to another.” Perhaps this seemed backward, or tautological, like defining mass in terms of the force needed to move an object. But then, mass can be defined that way. Information is entropy. This was the strangest and most powerful notion of all. Entropy—already a difficult and poorly understood concept—is a measure of disorder.
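
A minimal sketch of the idea that surprise is a way of talking about probabilities: the information in an outcome of probability p is -log2(p) bits, and entropy is the average of that quantity over all possible messages. The letter probabilities below are made-up placeholders, not measured English statistics, and the function names are illustrative.

```python
import math


def surprisal_bits(p):
    """Information, in bits, carried by an outcome that had probability p."""
    return -math.log2(p)


def entropy_bits(probs):
    """Shannon entropy H = -sum(p * log2 p); zero-probability outcomes are skipped."""
    return sum(p * surprisal_bits(p) for p in probs if p > 0)


if __name__ == "__main__":
    # Hypothetical probabilities for the letter that follows "t".
    p_likely, p_rare = 0.5, 0.001
    print("likely letter:", surprisal_bits(p_likely), "bits")
    print("rare letter:  ", surprisal_bits(p_rare), "bits")

    # Only one possible message: no uncertainty, so no information.
    print("one message:  ", entropy_bits([1.0]), "bits")
    # Four equally likely messages: two yes-or-no questions are needed.
    print("four messages:", entropy_bits([0.25] * 4), "bits")
```

The likely letter carries little information and the rare one carries a lot, which matches the h-after-t example in the passage.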

- “Shannon develops a concept of information which, surprisingly enough, turns out to be an extension of the thermodynamic concept of entropy.”

- Wiener was as worldly as Shannon was reticent. He was well traveled and polyglot, ambitious and socially aware; he took science personally and passionately. His expression of the second law of thermodynamics, for example, was a cry of the heart: We are swimming upstream against a great torrent of disorganization, which tends to reduce everything to the heat death of equilibrium and sameness.… This heat death in physics has a counterpart in the ethics of Kierkegaard, who pointed out that we live in a chaotic moral universe. In this, our main obligation is to establish arbitrary enclaves of order and system.… Like the Red Queen, we cannot stay where we are without running as fast as we can.

- The key was control, or self-regulation. To analyze it properly he borrowed an obscure term from electrical engineering: “feed-back,” the return of energy from a circuit’s output back to its input. When feedback is positive, as when the sound from loudspeakers is re-amplified through a microphone, it grows wildly out of control. But when feedback is negative—as in the original mechanical governor of steam engines, first analyzed by James Clerk Maxwell—it can guide a system toward equilibrium; it serves as an agent of stability.
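
The contrast between positive and negative feedback can be illustrated with a toy model, assuming a single number whose deviation from a target is fed back into itself at each step. The update rule and the `gain` parameter are assumptions made for illustration; they are not Wiener's or Maxwell's equations.

```python
def simulate(gain, target=0.0, start=1.0, steps=10):
    """Iterate x <- x + gain * (x - target) and return the full history.

    gain > 0 reinforces the deviation (positive feedback).
    -1 < gain < 0 opposes the deviation (negative feedback).
    """
    x = start
    history = [x]
    for _ in range(steps):
        x = x + gain * (x - target)
        history.append(x)
    return history


if __name__ == "__main__":
    runaway = simulate(gain=0.5)   # deviation grows by a factor of 1.5 per step
    settled = simulate(gain=-0.5)  # deviation halves each step
    print("positive feedback ends at", runaway[-1])
    print("negative feedback ends at", settled[-1])
```

With positive gain the value runs away from the target, like the loudspeaker and microphone; with negative gain it is pulled back toward equilibrium, like the governor.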

- “Information can be considered as order wrenched from disorder.”

- The ability of a thermodynamic system to produce work depends not on the heat itself, but on the contrast between hot and cold. A hot stone plunged into cold water can generate work—for example, by creating steam that drives a turbine—but the total heat in the system (stone plus water) remains constant. Eventually, the stone and the water reach the same temperature. No matter how much energy a closed system contains, when everything is the same temperature, no work can be done.
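
The stone-and-water example can be worked through numerically. This is a sketch under simplifying assumptions: constant heat capacities, no phase change, a perfectly insulated system, and hypothetical values for the stone and the water. It shows that the total heat stays constant, that the Carnot limit on extractable work falls to zero once the temperatures match, and that entropy rises along the way.

```python
import math


def equilibrium_temperature(c_hot, t_hot, c_cold, t_cold):
    """Shared final temperature (kelvin) of two bodies with heat capacities in J/K."""
    return (c_hot * t_hot + c_cold * t_cold) / (c_hot + c_cold)


def carnot_limit(t_hot, t_cold):
    """Largest fraction of heat that can become work between two temperatures."""
    return 1.0 - t_cold / t_hot


def entropy_change(c_hot, t_hot, c_cold, t_cold):
    """Total entropy change (J/K) when the two bodies reach equilibrium."""
    t_final = equilibrium_temperature(c_hot, t_hot, c_cold, t_cold)
    return c_hot * math.log(t_final / t_hot) + c_cold * math.log(t_final / t_cold)


if __name__ == "__main__":
    # Hypothetical values: a 600 K stone (800 J/K) dropped into 300 K water (4200 J/K).
    stone_c, stone_t, water_c, water_t = 800.0, 600.0, 4200.0, 300.0
    t_final = equilibrium_temperature(stone_c, stone_t, water_c, water_t)

    print("heat before:", stone_c * stone_t + water_c * water_t)
    print("heat after: ", (stone_c + water_c) * t_final)
    print("work limit before:", carnot_limit(stone_t, water_t))
    print("work limit after: ", carnot_limit(t_final, t_final))
    print("entropy change:", entropy_change(stone_c, stone_t, water_c, water_t))
```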

- Entropy was not a kind of energy or an amount of energy; it was, as Clausius had said, the unavailability of energy.

- First law: The energy of the universe is constant. Second law: The entropy of the universe always increases.

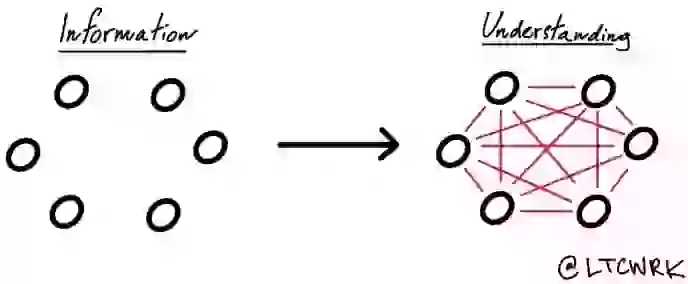

- It is not the amount of knowledge that makes a brain. It is not even the distribution of knowledge. It is the interconnectedness. When Wells used the word network—a word he liked very much—it retained its original, physical meaning for him, as it would for anyone in his time. He visualized threads or wires interlacing: “A network of marvelously gnarled and twisted stems bearing little leaves and blossoms”; “an intricate network of wires and cables.” For us that sense is almost lost; a network is an abstract object, and its domain is information. The birth of information theory came with its ruthless sacrifice of meaning—the very quality that gives information its value and its purpose.

- The common element was randomness, Chaitin suddenly thought. Shannon linked randomness, perversely, to information. Physicists had found randomness inside the atom—the kind of randomness that Einstein deplored by complaining about God and dice. All these heroes of science were talking about or around randomness.

- For McLuhan this was prerequisite to the creation of global consciousness—global knowing. “Today,” he wrote, “we have extended our central nervous systems in a global embrace, abolishing both space and time as far as our planet is concerned. Rapidly, we approach the final phase of the extensions of man—the technological simulation of consciousness, when the creative process of knowing will be collectively and corporately extended to the whole of human society.”

- Morse, Communication, and Time

- Morse had a great insight from which all the rest flowed. Knowing nothing about pith balls, bubbles, or litmus paper, he saw that a sign could be made from something simpler, more fundamental, and less tangible—the most minimal event, the closing and opening of a circuit. Never mind needles. The electric current flowed and was interrupted, and the interruptions could be organized to create meaning. The idea was simple, but Morse’s first devices were convoluted, involving clockwork, wooden pendulums, pencils, ribbons of paper, rollers, and cranks. Vail, an experienced machinist, cut all this back. For the sending end, Vail devised what became an iconic piece of user interface: a simple spring-loaded lever, with which an operator could control the circuit by the touch of a finger. First he called this lever a “correspondent”; then just a “key.” Its simplicity made it at least an order of magnitude faster than the buttons and cranks employed by Wheatstone and Cooke. With the telegraph key, an operator could send signals—which were, after all, mere interruptions of the current—at a rate of hundreds per minute.

- Undaunted, newspapers could not wait to put the technology to work. Editors found that any dispatch seemed more urgent and thrilling with the label “Communicated by Electric Telegraph.” Despite the expense—at first, typically, fifty cents for ten words—the newspapers became the telegraph services’ most enthusiastic patrons.

- The relationship between the telegraph and the newspaper was symbiotic. Positive feedback loops amplified the effect. Because the telegraph was an information technology, it served as an agent of its own ascendency.

- The most fundamental concepts were now in play as a consequence of instantaneous communication between widely separated points. Cultural observers began to say that the telegraph was “annihilating” time and space. It “enables us to send communications, by means of the mysterious fluid, with the quickness of thought, and to annihilate time as well as space,” announced an American telegraph official in 1860.

- Formerly all time was local: when the sun was highest, that was noon. Only a visionary (or an astronomer) would know that people in a different place lived by a different clock. Now time could be either local or standard, and the distinction baffled most people. The railroads required standard time, and the telegraph made it feasible.

- Ultimately, fast long-distance messaging was what made synchronization possible—not the reverse.

- The difficulty of forming a clear conception of the subject is increased by the fact that while we have to deal with novel and strange facts, we have also to use old words in novel and inconsistent senses. A message had seemed to be a physical object. That was always an illusion; now people needed consciously to divorce their conception of the message from the paper on

- The Morse system of dots and dashes was not called a code at first. It was just called an alphabet: “the Morse Telegraphic Alphabet,” typically. But it was not an alphabet. It did not represent sounds by signs. The Morse scheme took the alphabet as a starting point and leveraged it, by substitution, replacing signs with new signs. It was a meta-alphabet, an alphabet once removed. This process—the transferring of meaning from one symbolic level to another—already had a place in mathematics. In a way it was the very essence of mathematics. Now it became a familiar part of the human toolkit.
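
The "alphabet once removed" is a substitution table: each existing sign is replaced by a new sign. The sketch below uses the modern International Morse letters, which differ in places from the original American Morse of the 1840s, and the function names are illustrative.

```python
# International Morse code for the 26 Latin letters.
MORSE = {
    "A": ".-",   "B": "-...", "C": "-.-.", "D": "-..",  "E": ".",
    "F": "..-.", "G": "--.",  "H": "....", "I": "..",   "J": ".---",
    "K": "-.-",  "L": ".-..", "M": "--",   "N": "-.",   "O": "---",
    "P": ".--.", "Q": "--.-", "R": ".-.",  "S": "...",  "T": "-",
    "U": "..-",  "V": "...-", "W": ".--",  "X": "-..-", "Y": "-.--",
    "Z": "--..",
}
LETTERS = {code: letter for letter, code in MORSE.items()}


def morse_encode(text):
    """Replace each letter with its dot-dash sign; other characters are dropped."""
    return " ".join(MORSE[ch] for ch in text.upper() if ch in MORSE)


def morse_decode(signal):
    """Reverse the substitution for a space-separated run of dot-dash signs."""
    return "".join(LETTERS[sign] for sign in signal.split())


if __name__ == "__main__":
    sent = morse_encode("sos")
    print(sent)
    print(morse_decode(sent))
```

Nothing in the table refers to sounds; it only maps one set of written signs onto another, which is the point the passage makes.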

- One reason for these misguesses was just the usual failure of imagination in the face of a radically new technology. The telegraph lay in plain view, but its lessons did not extrapolate well to this new device. The telegraph demanded literacy; the telephone embraced orality. A message sent by telegraph had first to be written, encoded, and tapped out by a trained intermediary. To employ the telephone, one just talked. A child could use it. For that very reason it seemed like a toy. In fact, it seemed like a familiar toy, made from tin cylinders and string. The telephone left no permanent record. The Telephone had no future as a newspaper name. Business people thought it unserious. Where the telegraph dealt in facts and numbers, the telephone appealed to emotions. The new Bell company had little trouble turning this into a selling point.

- Queen Victoria installed one at Windsor Castle and one at Buckingham Palace (fabricated in ivory; a gift from the savvy Bell). The topology changed when the number of sets reachable by other sets passed a critical threshold, and that happened surprisingly soon. Then community networks arose, their multiple connections managed through a new apparatus called a switch-board.

- Gödel

- A cleaner formulation of Epimenides’ paradox—cleaner because one need not worry about Cretans and their attributes—is the liar’s paradox: This statement is false. The statement cannot be true, because then it is false. It cannot be false, because then it becomes true. It is neither true nor false, or it is both at once.
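
The paradox can be checked mechanically by trying both truth values and keeping only those that agree with what the statement says about itself. The helper below is purely illustrative, and the "truth-teller" sentence is added here only as a contrast; it does not appear in the passage.

```python
def consistent_values(statement):
    """Return the truth values v for which the statement, assumed to have value v, asserts v."""
    return [v for v in (True, False) if statement(v) == v]


def liar(value):
    """'This statement is false': it asserts the opposite of its own value."""
    return not value


def truth_teller(value):
    """'This statement is true': it asserts its own value."""
    return value


if __name__ == "__main__":
    print("liar:", consistent_values(liar))
    print("truth-teller:", consistent_values(truth_teller))
```

The liar sentence has no consistent assignment at all, while the contrasting sentence accepts either one.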

- Thus Gödel showed that a consistent formal system must be incomplete; no complete and consistent system can exist.

- No sooner did Gödel’s paper appear than von Neumann was presenting it to the mathematics colloquium at Princeton. Incompleteness was real. It meant that mathematics could never be proved free of self-contradiction. And “the important point,” von Neumann said, “is that this is not a philosophical principle or a plausible intellectual attitude, but the result of a rigorous mathematical proof of an extremely sophisticated kind.”

- Other

- Brenner was in a thoughtful mood, drinking sherry before dinner at King’s College. When he began working with Crick, less than two decades before, molecular biology did not even have a name. Two decades later, in the 1990s, scientists worldwide would undertake the mapping of the entire human genome: perhaps 20,000 genes, 3 billion base pairs. What was the most fundamental change? It was a shift of the frame, from energy and matter to information. “All of biochemistry up to the fifties was concerned with where you get the energy and the materials for cell function,” Brenner said. “Biochemists only thought about the flux of energy and the flow of matter. Molecular biologists started to talk about the flux of information. Looking back, one can see that the double helix brought the realization that information in biological systems could be studied in much the same way as energy and matter.…

- A part of Dawkins’s purpose was to explain altruism: behavior in individuals that goes against their own best interests. Nature is full of examples of animals risking their own lives in behalf of their progeny, their cousins, or just fellow members of their genetic club. Furthermore, they share food; they cooperate in building hives and dams; they doggedly protect their eggs. To explain such behavior—to explain any adaptation, for that matter—one asks the forensic detective’s question, cui bono? Who benefits when a bird spots a predator and cries out, warning the flock but also calling attention to itself? It is tempting to think in terms of the good of the group—the family, tribe, or species—but most theorists agree that evolution does not work that way. Natural selection can seldom operate at the level of groups. It turns out, however, that many explanations fall neatly into place if one thinks of the individual as trying to propagate its particular assortment of genes down through the future. Its species shares most of those genes, of course, and its kin share even more. Of course, the individual does not know about its genes. It is not consciously trying to do any such thing. Nor, of course, would anyone impute intention to the gene itself—tiny brainless entity. But it works quite well, as Dawkins showed, to flip perspectives and say that the gene works to maximize its own replication.

- Monod proposed an analogy: Just as the biosphere stands above the world of nonliving matter, so an “abstract kingdom” rises above the biosphere. The denizens of this kingdom? Ideas. Ideas have retained some of the properties of organisms. Like them, they tend to perpetuate their structure and to breed; they too can fuse, recombine, segregate their content; indeed they too can evolve, and in this evolution selection must surely play an important role. Ideas have “spreading power,” he noted—“infectivity, as it were”—and some more than others. An example of an infectious idea might be a religious ideology that gains sway over a large group of people.

- Redundancy—inefficient by definition—serves as the antidote to confusion. It provides second chances. Every natural language has redundancy built in.
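
One simple way to see redundancy providing second chances is a repetition code: send every bit three times and let the receiver take a majority vote. This is a sketch of one basic scheme chosen for illustration, not a claim about how natural language achieves its redundancy; the function names are hypothetical.

```python
def repeat_encode(bits, copies=3):
    """Send each bit `copies` times: inefficient, but robust to some errors."""
    return [bit for bit in bits for _ in range(copies)]


def repeat_decode(received, copies=3):
    """Recover each bit by majority vote over its group of copies."""
    decoded = []
    for start in range(0, len(received), copies):
        group = received[start:start + copies]
        decoded.append(1 if sum(group) > copies // 2 else 0)
    return decoded


if __name__ == "__main__":
    message = [1, 0, 1]
    sent = repeat_encode(message)
    sent[1] = 0  # noise flips one bit in transit
    print("received:", sent)
    print("decoded: ", repeat_decode(sent))
```

The message triples in length, which is the inefficiency, but a single flipped bit in any group no longer changes what the receiver reads.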

- The alphabet spread by contagion. The new technology was both the virus and the vector of transmission. It could not be monopolized, and it could not be suppressed.

What I got out of it

- A deep background on information, information theory, communication, and technology, and how they came to be. You can get most of the book out of this TED talk.