Summary

Dobelli lays out some of the most common and disastrous mental biases we are susceptible to. The cognitive biases and errors we make have been made by every generation for hundreds of years. Learning how to spot and eventually mitigate these risks can have great benefits for our lives, relationships and decision making.

The Rabbit Hole is written by Blas Moros.

- The list of fallacies laid out here is by no means complete

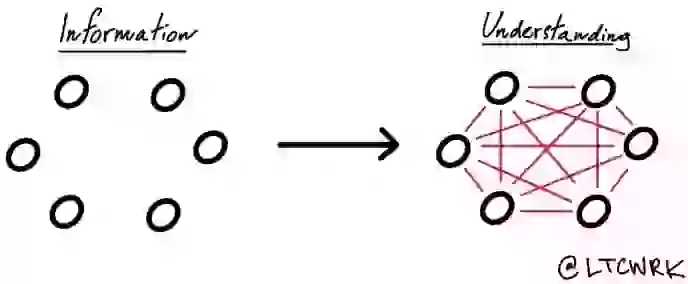

- Recognize that many of these fallacies are interconnected and play off each other. Lessening one also often improves others

- Some cognitive errors are necessary for living a happy, normal life so we don't want to remove every fallacy. Removing most, however, helps avoid most large, stupid mistakes - less irrationality

- Survivorship bias - so easy to ignore failures and think odds of success much higher than reality. Guard against it by continuously studying 'graveyards'

- Swimmer's body illusion - Body a result of selection, not a result of swimming. Don't fail to recognize factor of selection for results

- Clustering illusion - Brain seeks patterns and rules and simply invents them if can't find any. Don't fall into trap of seeing patterns when there are none

- Social proof - Herd instinct causes us to believe that the more people are doing something, the better an idea it is - making it likely we follow suit. The evil behind investment bubbles, cults and more

- Sunk cost fallacy - don't keep on doing something just because you have already sunk a lot of time, money, energy or love into it

- Reciprocity - Beware free gifts

- Confirmation bias - skewing new information so it fits what we already believe. Must constantly search for disconfirming evidence which is one of the hardest things to do (Darwin the master)

- Authority bias - Tend to blindly follow authority figures

- Contrast effect - Have difficulty with absolute judgments as we tend to always compare to something else. People awful at noticing small, gradual changes

- Availability bias - don't think that examples that are most likely to come to mind are necessarily correct or most telling. We think dramatically, not quantitatively. People tend to prefer wrong information to no information (map is not the terrain)

- It'll get worse before it gets better fallacy - A form of confirmation bias - upside for consultant either way (right if things stay bad or customer happy if things improve)

- Story bias - Stories simplify and distort reality as we build meaning into things only after the fact. Narratives often irrelevant but we find them irresistible. Be very aware of story teller's intentions and incentives (you are often the story teller)

- Hindsight bias - keeping a journal helps keep you honest. All seems clear in retrospect

- Overconfidence effect - people are systematically overconfident in forecasts, knowledge, predictions and decisions on a massive scale. Experts suffer even more than laymen

- Chauffeur knowledge - do you truly understand something or only its surface? (Planck and chauffeur). True experts delineate their circle of competence and stick within it

- Control illusion - thinking we can sway an outcome when we can't

- Incentive super response tendency - beware what you incentivize! Rat breeding example. People respond to incentives themselves and not the grander intentions behind them. Good incentive systems think of both intent and reward

- Regression to the mean

- Outcome bias - Never judge a decision by its outcome, rather judge the process

- Paradox of choice - less is more. Good enough is the new optimal

- Liking bias - we will help or buy more from people we like, and the more similar they are to us the more we like them

- Endowment effect - liking something more merely because we own it

- Coincidence - most underestimate the role of chance in our lives

- Groupthink - reckless decisions made because social proof gets people to agree when they otherwise would not. In a tight group, speaking your mind even more important

- Neglect of probability - react to size or danger of event rather than likelihood of it happening. No intuitive grasp of risk

- Scarcity error - people more highly value what is scarce. Focus only on price and benefits

- Base rate neglect

- Gambler's fallacy - dice do not have memory, play the probability

- Anchoring effect

- Induction - drawing universal conclusions from individual observations

- Loss aversion

- Social loafing - individual effort and accountability decrease as we become one with the crowd. Smaller teams tend to be more effective

- Exponential growth - people cannot grasp the power of exponential growth

- Winner's curse - highest bidders win but typically pay too much so lose. Competition and ambiguity of true value of things cause this

- Fundamental attribution error - Overestimate individual's influence and underestimate the environment's

- False causality - mistaking correlation, effect or coincidence for causality

- Halo effect - a single bright characteristic makes everything else seem better

- Alternative paths - all the outcomes that could have happened but didn't. Don't contemplate invisible or missing outcomes or info as much as we should

- Forecast illusion - people horrible at predictions, even experts

- Conjunction fallacy - when we judge a subset to be more probable than the entire set. We all have soft spots for plausible stories

- Framing - information is perceived differently depending on how it is presented

- Action bias - people want to look active even if it accomplishes nothing, accentuated in new situations or where you're unsure

- Omission bias - inaction seems more admissible than action even if both lead to the same outcome

- Self serving bias - attribute success to ourselves and failure to external factors

- Hedonic treadmill - we always recalibrate happiness and sadness to our situation. Avoid negative things you can't get accustomed to, expect only short term happiness from material things, get as much free time, autonomy and deep relationships as possible

- Self selection bias

- Association bias - seeing connections where none exist

- Persian messenger syndrome

- Beginner's luck - regression to mean always brings you back down. True skill lies in outperformance over long periods of time

- Cognitive dissonance

- Hyperbolic discounting - desire for immediate gratification causes us to make bad decisions for our long term interests

- Because justification - people accept reasons even if they don't explain everything

- Decision fatigue - decide better when you decide less

- Contagion bias - things can get a negative connotation simply through association

- Problem with averages - often mask underlying distribution. Don't cross a river which is on average 4 ft deep. Beware things which follow power laws (when extreme outliers dominate like Bill Gates' wealth)

- Motivation crowding - surprisingly small monetary incentives crowd out other incentives (volunteering feels less good if we are compensated). Bonuses help more in jobs where people don't get intrinsic fulfillment

- Twaddle tendency - excessive words hide lazy thinking or poor understanding. Jabber disguises ignorance

- Will Rogers phenomenon - accounting type illusions which make situations seem better but actually add no value

- Information bias - the delusion that more information helps us make better decisions

- Effort justification - overvalue things you put a lot of effort into (Ikea effect)

- Law of small numbers - much larger fluctuations with small numbers

- Expectations - raise expectations for self and those you love and lower them for things you can't control

- Simple logic - scrutinize even simple sounding problems more closely

- Forer effect - why pseudoscience works so well, very general or flattering statements most people want to associate with

- Volunteer's folly - giving your time is often not the most effective way to volunteer. Using your skills to earn money and donating it to a cause, or to those who can perform the needed work more aptly, is often a better way to give

- Affect heuristic - emotional reactions determine how we judge risks and benefits, rather than expected value and probabilities. Substituting "how do I feel about this?" for "what do I think about this?"

- Introspection illusion - internal reflection is not reliable and makes us overconfident in our beliefs, nothing is more convincing than our own beliefs; become your own toughest critic

- Boat burning effect - remove options in order to become all in. Options and more choices have hidden costs and diminish willpower. Invert in order to determine what to avoid

- Neomania - new things always seem to shine brighter. Rule of thumb - whatever has survived for X years will survive for another X years

- Sleeper effect - forget source of information but remember message and how it made us feel. Don't accept any unsolicited advice, avoid ads, remember source of all info you get

- Alternative blindness - fail to compare your best alternative to next best alternative(s). Consider all alternatives

- Social comparison bias - tendency to withhold assistance from people who might outdo you even if you'll look like a fool in the long run (hire people who are better than you)

- Primacy and recency effects

- Not invented here syndrome - tendency to fall in love with our own ideas

- Black swan - unthinkable events which affect every aspect of your life; profit from the unthinkable by trying to catch a positive black swan (entrepreneur or inventor or build something which scales) and avoid negative black swans by giving yourself margin in every aspect of your life

- Domain dependence - insights do not pass well from one field to another, especially from theoretical to practical

- False consensus effect - frequently overestimate the popularity in the general public of things we like

- Falsification of history - remove wrong past assumptions so you think you were right all along; adjust past views to present views. Safe to assume half of what you remember is wrong

- In group / out group bias - even small similarities can cause in group bias and anyone outside is a potential enemy

- Ambiguity aversion - difference between risk and ambiguity is that with ambiguity the probabilities of outcomes are unknown. Can make calculations knowing risk but not with uncertainty

- Default tendency - status quo bias, cling to way things are even if not the best option

- Fear of regret - those who don't follow the crowd tend to feel more regret and therefore tend to act more conservatively. Last chances evoke panic

- Salience effect - outstanding features get much more attention than they deserve, can lead to prejudice and changes how we interpret the past and how we act, avoid jumping to the easiest conclusions

- House money effect - we spend and think about money differently depending on how we got it

- Procrastination - Self control drains will power - eliminate distractions, set self imposed deadlines for yourself, refuel your batteries

- Envy - most destructive sin as it is no fun in any way, different from jealousy as jealousy requires at least 3 people, tend to envy people similar to us, stop comparing self to others, determine circle of competence and work on mastery, be only envious of the person you want to become

- Personification - we empathize with other people but less so if we can't see them or don't know them, statistics don't stir us but people do

- Illusion of attention - tend to only see what we focus on and miss everything else, think the unthinkable, try to spot the black swans, pay attention to silences as much as noises

- Strategic misrepresentation - exaggerate self or promises in order to achieve some goal, look at past performance and do a cost/benefit analysis to protect self from this

- Overthinking - paralysis by analysis, use your emotions and intuition strategically with simple matters or areas you are highly skilled in but use your reasoning for more complex matters

- Planning fallacy - people take on too much and it is even worse in groups, we are not natural planners and underestimate the role of outside events, use pre mortems

- Man with a hammer syndrome - locate shortcomings and try to add tools (mental models) to aid you in your life, thinking and decision making

- Zeigarnik effect - seldom forget uncompleted tasks but immediately forget what we've finished, outstanding tasks gnaw at us until we have a clear and detailed view of how we will accomplish them, create step by step instructions with detail to complete tasks

- Illusion of skill - luck plays a bigger role than skill, in some areas skill plays almost no role

- Feature positive effect - missing information much harder to appreciate than what is present, have problems perceiving non events and the absence of things

- Cherry picking - selecting and showcasing only the best characteristics and hiding or not mentioning the rest. Always ask about the failures and try to notice what is missing or not mentioned

- Fallacy of the single cause - no single factor causes any event but people want a single cause to explain an event

- Intention to treat error - failed events show up (unlike in survivorship bias) but in the wrong category. Always try to determine if failed events are not included in the study

- News - makes people well informed but ignorant, harmful in the long run

- Negative knowledge or knowing what to avoid is much more important than positive knowledge or knowing what to do - via negativa. Eliminate the downside and the upside will take care of itself

- Today's world, unlike our ancestors', rewards deep thinking and independent action. Due to our biology, this is very difficult

- A really complete and informative book which details some of the most common heuristics and mental biases which lead us to poor decisions or faulty thinking
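
The Will Rogers phenomenon noted above is easiest to see with numbers. Below is a minimal Python sketch, assuming two made-up groups (the values and the `reclassify` helper are hypothetical, not from the book). Moving one member from the higher group to the lower one raises both group averages, yet the overall average does not change, which is why the improvement is an accounting illusion.

```python
from statistics import mean


def reclassify(low, high, item):
    """Move `item` from the `high` group to the `low` group.

    Returns new lists and leaves the inputs untouched.
    """
    new_high = list(high)
    new_high.remove(item)  # raises ValueError if item is not in `high`
    return low + [item], new_high


# Hypothetical scores, chosen only for illustration.
low_group = [1, 2, 3, 4]
high_group = [5, 6, 7, 8, 9]

# 5 is below the high group's average but above the low group's average.
new_low, new_high = reclassify(low_group, high_group, 5)

print("low group average:", mean(low_group), "->", mean(new_low))
print("high group average:", mean(high_group), "->", mean(new_high))
print("overall average:", mean(low_group + high_group), "->", mean(new_low + new_high))
```

The trick only works when the moved member sits between the two group averages, so a useful check on any "both groups improved" claim is whether anything other than the labels changed.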
